“Every time a person runs a Google search, watches a YouTube video or sends a message through Gmail, the company’s data centers full of computers use electricity. Those data centers around the world continuously draw almost 260 million watts — about a quarter of the output of a nuclear power plant.” — “Google Details, and Defends, Its Use of Electricity,” The New York Times

It’s not a well-kept secret: immense data centers, the lifeblood of business and consumer technology networks, consume a huge amount of energy. Today, we wanted to take a look at one of the world’s largest operators of data centers, Google, and its parent corporation, Alphabet.

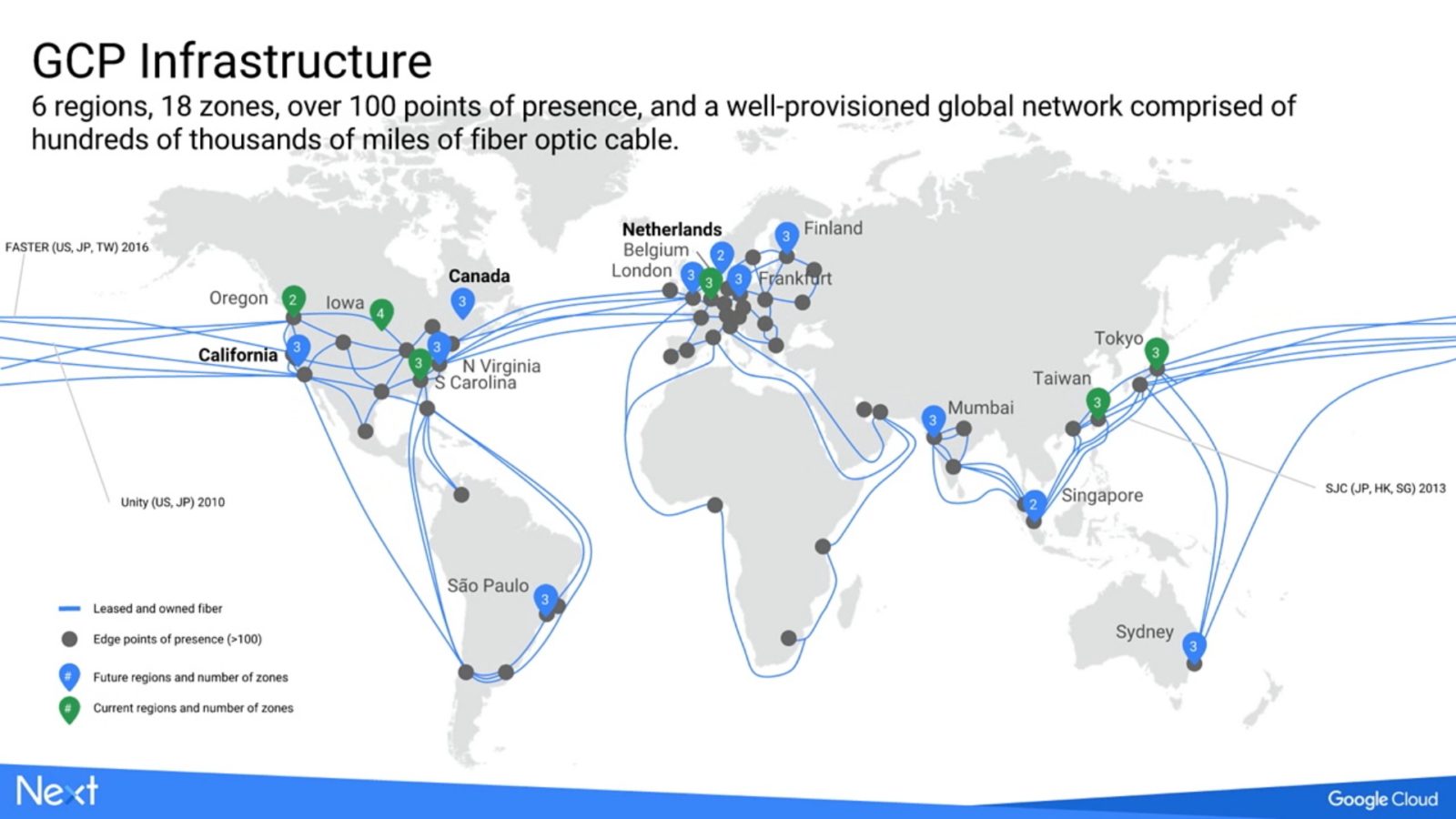

Google’s Cloud Platform Infrastructure

According to Data Center Knowledge:

“Google has placed a lot of focus (and dedicated a lot of resources) to selling cloud services to enterprises, going head-to-head against the market giant Amazon Web Services and the second-largest player in the space, Microsoft Azure … While the company already has what is probably the world’s largest cloud, it was not built to support enterprise cloud services. To do that, the company needs to have data centers in more locations, and that’s what it has been doing, adding new locations to support cloud services and adding cloud data center capacity wherever it makes sense in existing locations.”

While the company is one of the world’s largest producers and users of renewable energy, its data centers still draw a lot of power, both in aggregate and individually. Large cloud companies take extraordinary care to optimize their power usage in data centers. According to its most recent data, Google’s most efficient data center, based on PUE (power usage effectiveness, that is, the ratio of the total power entering the data center to the power that goes to actual computing), is in St. Ghislain, Belgium. With a PUE of 1.09, for every watt used for computing, only 0.09 watts are used for non-computing needs, such as cooling and power conversion.
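As a rough illustration of the PUE arithmetic, the sketch below uses the standard definition of PUE (total facility power divided by power delivered to computing equipment). The function names are hypothetical and chosen only for this example.

```python
def overhead_per_it_watt(pue: float) -> float:
    """Watts of non-computing overhead for each watt used for computing."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return pue - 1.0


def it_share_of_total(pue: float) -> float:
    """Fraction of total facility power that reaches computing equipment."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 / pue


# Figures for the 1.09 PUE quoted above
print(f"{overhead_per_it_watt(1.09):.2f} W overhead per computing watt")  # 0.09
print(f"{it_share_of_total(1.09):.1%} of total power goes to computing")  # 91.7%
```

Note that a PUE of 1.09 means about 91.7% of the facility’s power reaches the computing equipment, not 91%: the overhead is measured against computing power, not against the total.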

Yet even the most efficient data center uses a tremendous amount of power. While exact figures are difficult to come by, one researcher pegged Google’s overall power draw at 3.2 GW. Using rough numbers, with 15 data centers worldwide, that works out to an average continuous draw of about 213 MW per data center.
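The back-of-the-envelope average can be reproduced as follows. This is a sketch that takes the 3.2 GW estimate and the count of 15 data centers at face value and assumes the draw is continuous throughout the year; the function names are hypothetical. Because megawatts measure power rather than energy, the yearly figure is expressed separately in terawatt-hours.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours, ignoring leap years


def average_power_mw(total_gw: float, sites: int) -> float:
    """Average continuous draw per site, in megawatts."""
    return total_gw * 1000 / sites


def annual_energy_twh(power_mw: float) -> float:
    """Energy used in one year at a constant draw, in terawatt-hours."""
    return power_mw * HOURS_PER_YEAR / 1_000_000


per_site_mw = average_power_mw(3.2, 15)
print(f"{per_site_mw:.0f} MW per data center")                 # 213
print(f"{annual_energy_twh(per_site_mw):.2f} TWh per year")    # 1.87
```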

Demand for bandwidth continues to grow

Today’s cloud data centers are transitioning their server interfaces from 10 Gbps to 25 Gbps. The transition to 50 Gbps server interfaces is anticipated within the next two to three years. This drives the need for higher-capacity switches and optical interfaces both inside and between data centers. Because power consumption cannot keep growing at the same pace as bandwidth, alternative solutions are necessary.

Next-generation networks are adopting digital signal processing techniques designed to overcome physical impairments in optical fibers at higher data rates. This signal processing is implemented in CMOS, taking advantage of advanced process nodes to implement low-power solutions. Moving to smaller process nodes allows both higher-capacity interfaces and lower-power implementations. Moore’s Law predicts that the density of transistors on an integrated circuit doubles roughly every two years. Along with increased density, these smaller geometries may result in lower power.
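To give a sense of scale, the doubling rule can be written as a simple growth factor. This is an idealized sketch that assumes an exact two-year doubling period; real process nodes do not follow the curve this neatly, and the function name is hypothetical.

```python
def density_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Idealized growth in transistor density after the given number of years."""
    return 2.0 ** (years / doubling_period_years)


print(density_factor(2))             # 2.0
print(density_factor(4))             # 4.0
print(f"{density_factor(5):.2f}")    # 5.66
```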

The Acacia advantage

Acacia has specialized in making extremely power-efficient optical connectivity solutions. By optimizing the design of both the digital signal processor (DSP) and silicon photonics, as well as developing highly optimized algorithms for features such as Forward Error Correction (FEC), Acacia has been able to reduce the power consumed by a coherent optical interconnect by as much as 85% in the last five years. Acacia’s award-winning CFP2 is continuing that trend by helping our network equipment partners deliver new levels of power efficiency in a pluggable coherent optical interface.